Last year, tech companies reported over 45 million online photos and videos of children being sexually abused — more than double what they found the previous year.

Online predators create and share the illegal material, which is increasingly cloaked by technology. Tech companies, the government and the authorities are no match.

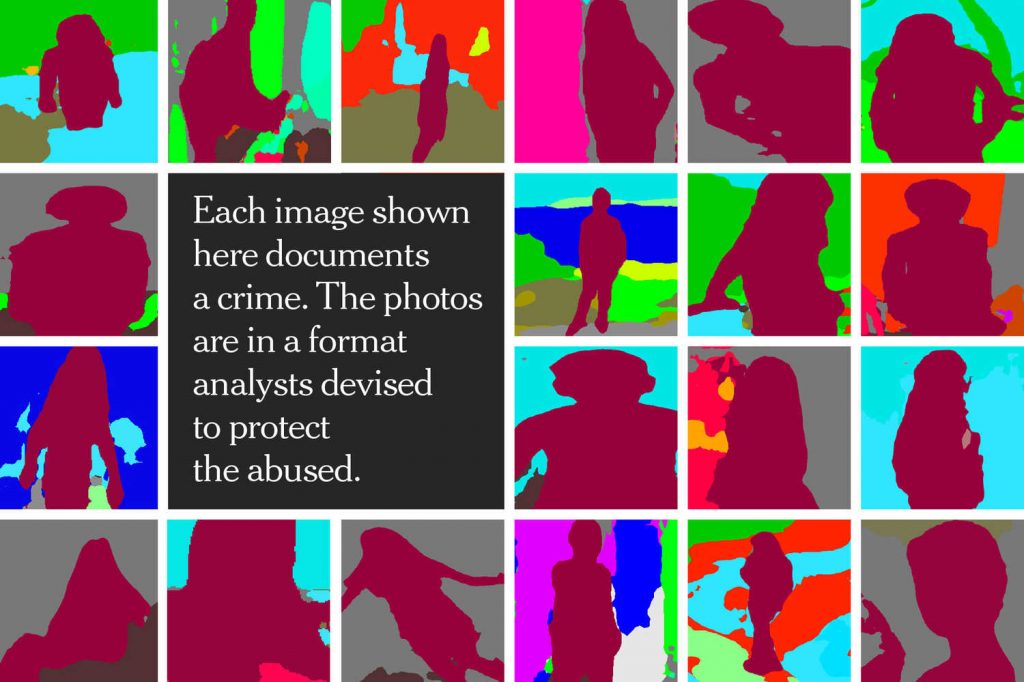

The images are horrific. Children, some just 3 or 4 years old, being sexually abused and in some cases tortured.

Pictures of child sexual abuse have long been produced and shared to satisfy twisted adult obsessions. But it has never been like this: Technology companies reported a record 45 million online photos and videos of the abuse last year.

More than a decade ago, when the reported number was less than a million, the proliferation of the explicit imagery had already reached a crisis point. Tech companies, law enforcement agencies and legislators in Washington responded, committing to new measures meant to rein in the scourge. Landmark legislation passed in 2008.

Yet the volume of detected content kept growing exponentially.

An investigation by The New York Times found an insatiable criminal underworld that had exploited the flawed and insufficient efforts to contain it. As with hate speech and terrorist propaganda, many tech companies failed to adequately police sexual abuse imagery on their platforms, or failed to cooperate sufficiently with the authorities when they found it.

Law enforcement agencies devoted to the problem were left understaffed and underfunded, even as they were asked to handle far larger caseloads.

The Justice Department, given a major role by Congress, neglected even to write mandatory monitoring reports and did not appoint a senior executive-level official to lead a crackdown. And the group tasked with serving as a federal clearinghouse for the imagery, the go-between for the tech companies and the authorities, was ill equipped for the expanding demands.

A paper recently published in conjunction with that group, the National Center for Missing and Exploited Children, described a system at “a breaking point,” with reports of abusive images “exceeding the capabilities of independent clearinghouses and law enforcement to take action.” It suggested that future advancements in machine learning might be the only way to catch up with the criminals.
